At MIT’s Computer Science and Artificial Intelligence Laboratory (CSAIL), software engineers recently developed a machine learning system that bridges the two prevailing techniques of robot programming. Currently, there are two widely used methods to program a robot. The first is learning from a visual demonstration: the robot observes a task being completed and replicates the movements involved. The second is to program specific tasks, goals, and constraints by hand in motion-planning code.

Neither technique is perfect. A task learned through visual demonstration does not transfer to another robot. Motion planning uses sampling or optimization methods, which adapt readily to other robotic systems but are time-consuming because programmers must hand-code them.

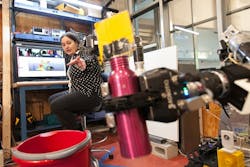

The new machine learning technique from CSAIL is called C-Learn. The “C” stands for constraints. C-Learn allows noncoders to teach robots movements and tasks by providing some basic information on the objects being manipulated, then showing the robot a single demo on how to perform the task.

The major benefit of C-Learn is that, unlike with visual demonstration learning, tasks learned by robot A can be transferred to robot B. The two can be completely different robots (an XY scatter robot versus a robotic arm, for example), and C-Learn allows the programming of one to be used for the other.

According to Claudia Pérez-D’Arpino, a Ph.D. student who’s written on C-LEARN with MIT Professor Julie Shah, “By combining the intuitiveness of learning from demonstration with the precision of motion-planning algorithms, this approach can help robots do new types of tasks that they haven’t been able to learn before, like multistep assembly using both of their arms.”

The program works by first giving the robot a knowledge base that describes how to reach and grasp different objects. For example, it may hold different ways to grab similarly shaped objects that have different functions, like a tire and a steering wheel. The operator then uses a 3D interface to show the robot the task to be performed. The relevant motions are captured in “keyframes,” and by applying these keyframes to different objects in the knowledge base, the robot devises its own motion plans to perform different tasks.
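To make the idea concrete, here is a minimal Python sketch of how demonstrated keyframes might be combined with a knowledge base of grasp information. It is an illustration only, not CSAIL's implementation: the names `GraspConstraint`, `Keyframe`, `KNOWLEDGE_BASE`, and `plan_from_keyframes`, along with every field and numeric value, are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GraspConstraint:
    """Hypothetical knowledge-base entry: how to approach and hold an object."""
    approach_axis: str  # e.g. "top" or "side"
    standoff_m: float   # distance to pause at before closing the gripper


@dataclass(frozen=True)
class Keyframe:
    """One captured step of a demonstration, tied to the object it refers to."""
    action: str  # e.g. "reach" or "grasp"
    target: str  # name of the object used in the demonstration


# Illustrative entries only; the values are made up for this sketch.
KNOWLEDGE_BASE = {
    "tire": GraspConstraint(approach_axis="side", standoff_m=0.10),
    "steering_wheel": GraspConstraint(approach_axis="top", standoff_m=0.05),
}


def plan_from_keyframes(keyframes, object_name, knowledge_base):
    """Re-target demonstrated keyframes onto another object in the knowledge base."""
    constraint = knowledge_base[object_name]
    plan = []
    for kf in keyframes:
        plan.append({
            "action": kf.action,                        # kept from the demonstration
            "target": object_name,                      # swapped to the new object
            "approach_axis": constraint.approach_axis,  # taken from the knowledge base
            "standoff_m": constraint.standoff_m,
        })
    return plan


# A single demonstration on a tire, reused for a steering wheel.
demo = [Keyframe("reach", "tire"), Keyframe("grasp", "tire")]
plan = plan_from_keyframes(demo, "steering_wheel", KNOWLEDGE_BASE)
```

The sequence of actions comes from the single demonstration, while the object-specific details come from the knowledge base. A real planner would turn each step into constraints for a motion-planning solver rather than a plain dictionary.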

The operator takes part in the robot’s planning process by approving or adjusting the automatically derived motion plans. Motion plans derived solely by the robot succeeded 87.5% of the time, but with assistance from a human operator, the tasks were completed 100% of the time. The operator’s corrections are minor, typically fixing errors caused by inaccurate sensor measurements.
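The approve-or-adjust step can be sketched as a small review loop. This is an assumption-laden illustration rather than the actual C-Learn interface: `review_plan`, `fix_sensor_offset`, the `x_m` field, and the 2 cm correction are all hypothetical.

```python
def review_plan(plan, operator_decision):
    """Return the plan to execute after the operator reviews each step.

    operator_decision is a callable that receives one step (a dict) and
    returns None to approve it unchanged, or a dict of fields to overwrite.
    """
    reviewed = []
    for step in plan:
        correction = operator_decision(step)
        if correction:
            reviewed.append({**step, **correction})  # adjusted copy
        else:
            reviewed.append(dict(step))              # approved as-is
    return reviewed


# Hypothetical automatically derived plan; positions are in meters.
auto_plan = [
    {"action": "reach", "x_m": 0.50},
    {"action": "grasp", "x_m": 0.50},
]


def fix_sensor_offset(step):
    """Hypothetical operator: nudges the grasp to offset a sensor error."""
    if step["action"] == "grasp":
        return {"x_m": 0.52}
    return None


final_plan = review_plan(auto_plan, fix_sensor_offset)
```

Working on copies leaves the automatically derived plan untouched, so the original and the corrected versions can be compared afterward.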

Pérez-D’Arpino points out that this method of learning is similar to how humans learn. “This approach is actually very similar to how humans learn in terms of seeing how something’s done and connecting it to what we already know about the world,” she said. “We can’t magically learn from a single demonstration, so we take new information and match it to previous knowledge about our environment.”

The goal for future robots programmed with C-Learn is for them to be more adaptive. Robots trained with C-Learn can determine how to perform tasks even if there are obstacles along the path. Steps like these make robots behave more like human operators.

About the Author

Carlos Gonzalez

Special Projects Manager at ASME (The American Society of Mechanical Engineers)

Carlos Gonzalez is a Special Projects Manager at the American Society of Mechanical Engineers (ASME).

Carlos started working as a Technology Editor for Machine Design magazine in 2015. In 2018, he became the Content Director of Machine Design and Hydraulics & Pneumatics magazines. Topics Carlos has written about over the years include robotics, alternative energy, aerospace, and STEM education.

Carlos achieved a B.S. in mechanical engineering at Manhattan College and an M.S. in mechanical engineering at Columbia University.

Prior to working for Machine Design, Carlos worked at Sikorsky Aircraft in its Hydraulics and Mechanical Flight Controls department, working on the S76D commercial and the Navy’s CH-53K aircraft programs.